Benjamin Todd on what the effective altruism community most needs (80k team chat #4)

In the last '80k team chat' with Ben Todd and Arden Koehler, we discussed what effective altruism is and isn't, and how to argue for it. In this episode we turn now to what the effective altruism comm...

12 Nov 2020 · 1h 25min

#87 – Russ Roberts on whether it's more effective to help strangers, or people you know

If you want to make the world a better place, would it be better to help your niece with her SATs, or try to join the State Department to lower the risk that the US and China go to war? People involve...

3 Nov 2020 · 1h 49min

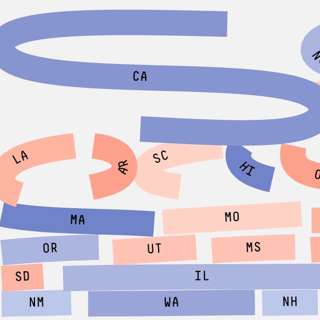

How much does a vote matter? (Article)

Today’s release is the latest in our series of audio versions of our articles. In this one (How much does a vote matter?) I investigate the two key things that determine the impact of your vote: • ...

29 Oct 2020 · 31min

#86 – Hilary Greaves on Pascal's mugging, strong longtermism, and whether existing can be good for us

Had World War 1 never happened, you might never have existed. It’s very unlikely that the exact chain of events that led to your conception would have happened otherwise — so perhaps you wouldn't have...

21 Oct 2020 · 2h 24min

Benjamin Todd on the core of effective altruism and how to argue for it (80k team chat #3)

Today’s episode is the latest conversation between Arden Koehler and our CEO, Ben Todd. Ben’s been thinking a lot about effective altruism recently, including what it really is, how it’s framed, an...

22 Sep 2020 · 1h 24min

Ideas for high impact careers beyond our priority paths (Article)

Today’s release is the latest in our series of audio versions of our articles. In this one, we go through some more career options beyond our priority paths that seem promising to us for positively ...

7 Sep 2020 · 27min

Benjamin Todd on varieties of longtermism and things 80,000 Hours might be getting wrong (80k team chat #2)

Today’s bonus episode is a conversation between Arden Koehler and our CEO, Ben Todd. Ben’s been doing a bunch of research recently, and we thought it’d be interesting to hear about how he’s current...

1 Sep 2020 · 57min

Global issues beyond 80,000 Hours’ current priorities (Article)

Today’s release is the latest in our series of audio versions of our articles. In this one, we go through 30 global issues beyond the ones we usually prioritize most highly in our work, and that you...

28 Aug 2020 · 32min